Where Twisted Light Could Help Detect Drones

- Trevor Alexander Nestor

- May 4

Light can be made to carry orbital angular momentum. The wavefront spirals around the propagation axis like a corkscrew, and the beam profile takes on a characteristic doughnut shape with a dark center. This is "OAM light," and it has a property that makes it interesting for sensing rotating things: when an OAM beam reflects off a smooth rotating surface, it picks up a Doppler shift proportional to the OAM order times the rotation rate, separately from any standard linear Doppler shift the target produces.

This effect was experimentally demonstrated in 2013. Lavery and colleagues spun a uniform diffuser at known rates, illuminated it with OAM beams of different orders, and read off the rotation rate cleanly from the rotational Doppler line. The whole illuminated surface contributed to the signal, the line was sharp, and the technique scaled with the beam order. It was a clean physics result that opened up a real engineering question: where else can this be useful?

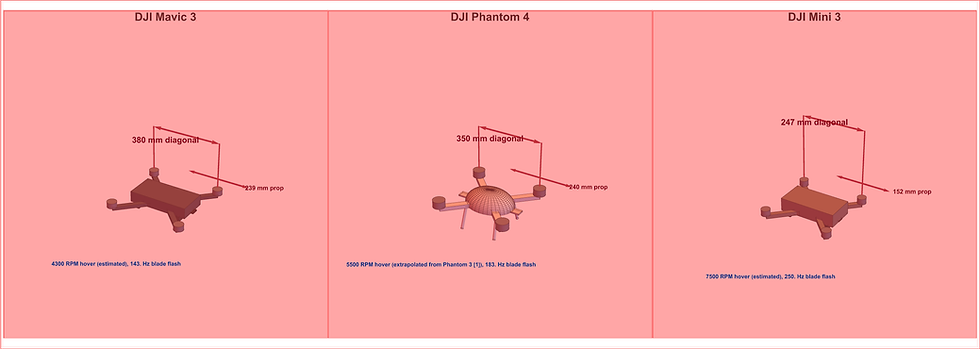

Small drones are an obvious candidate. They have rotors that spin at known rates. Different drone models hover at different RPMs. The rotational Doppler shift, if you can extract it, becomes a direct readout of which drone you're looking at. The question is which configurations of an OAM-LIDAR system would actually deliver that readout, and the answer turns out to be more specific than the general enthusiasm for OAM-based remote sensing might suggest.

I want to walk through four scenarios where OAM light has a credible path to helping with drone detection, and what would need to be true for each to work.

Coherent rotational Doppler from rotor disks

The cleanest case is the direct application of the Lavery effect to drone rotors. If you can produce coherent reflection from a substantial fraction of a rotor disk's surface, the rotational Doppler shift becomes a sharp spectral feature at the OAM order times the rotor's rotation frequency in revolutions per second. A Mavic 3 hovering at 5000 RPM turns 83.3 times per second, so an ell-equals-five probe gives a 417 Hz line. For a Mini 3 at 6040 RPM with the same probe, it's 503 Hz. For a Phantom 4 at 5500 RPM, 458 Hz. The lines are well separated, scale linearly with rotor speed, and sit above the low-frequency band where atmospheric scintillation dominates.
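The arithmetic behind those three lines is short enough to write down. This is a minimal sketch that assumes the simple relation used above (line frequency equals OAM order times revolutions per second); the function and dictionary names are my own placeholders, and the hover speeds are the ones quoted in this section.

```python
def rotational_doppler_hz(ell, rpm):
    """Rotational Doppler line in Hz: |OAM order| times rotation frequency (rev/s)."""
    return abs(ell) * rpm / 60.0


# Hover speeds as quoted in the text, in revolutions per minute.
HOVER_RPM = {"Mavic 3": 5000, "Mini 3": 6040, "Phantom 4": 5500}


def doppler_table(ell):
    """Map each drone name to its expected rotational Doppler line for a given order."""
    return {name: rotational_doppler_hz(ell, rpm) for name, rpm in HOVER_RPM.items()}


if __name__ == "__main__":
    for name, freq in doppler_table(5).items():
        print(f"{name}: {freq:.0f} Hz")
```

Raising the probe order spreads the lines further apart in absolute terms, since the spacing between two drones scales with ell just as the lines themselves do.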

The catch is the word "coherent." Rotational Doppler requires the rotor surface to act as a coherent reflector, not as a chopping mask. At near-infrared wavelengths around 1.55 micrometers, drone rotor surfaces look very rough; speckle dominates and the rotational Doppler line gets buried. At longwave infrared (LWIR) wavelengths around 10 micrometers, the same surfaces look much smoother relative to the wavelength, the speckle cells are larger, and a much greater fraction of the surface contributes coherently. An LWIR OAM-LIDAR system targeting drone rotors at moderate range is the configuration where rotational Doppler should work.
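One way to get a feel for how strongly wavelength matters is the standard scalar-scattering estimate for the coherent (specular) fraction of reflected power at normal incidence, exp(-(4πσ/λ)²), where σ is the RMS surface roughness. That formula is my addition rather than something derived in this post, it assumes Gaussian-distributed surface heights, and the 0.5 micrometer roughness below is a hypothetical placeholder, not a measured value for any rotor blade.

```python
import math


def coherent_fraction(rms_roughness_m, wavelength_m):
    """Coherent fraction of reflected power at normal incidence.

    Scalar-scattering estimate exp(-(4*pi*sigma/lambda)^2), which assumes
    Gaussian-distributed surface heights.
    """
    g = 4.0 * math.pi * rms_roughness_m / wavelength_m
    return math.exp(-g * g)


# Hypothetical RMS roughness, chosen only to illustrate the wavelength scaling.
SIGMA_M = 0.5e-6

for label, wavelength_m in (("1.55 um", 1.55e-6), ("10 um", 10.0e-6)):
    print(f"{label}: coherent fraction = {coherent_fraction(SIGMA_M, wavelength_m):.3g}")
```

Under that assumed roughness the coherent fraction is vanishingly small at 1.55 micrometers and a sizable share of the return at 10 micrometers. Real blades would need measured roughness values to say anything quantitative.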

The other engineering knobs that move the needle in the right direction are range and target size. Shorter range means less atmospheric phase scrambling between transmitter and target, which preserves more of the OAM mode purity. Larger rotors mean more coherent surface area at any given wavelength. A system designed for fifty-meter range against the Mavic 3 class of drone is much friendlier to rotational Doppler than a system designed for two-hundred-meter range against the Mini 3 class.

Static azimuthal shape classification

Drones are not rotationally symmetric. A quadcopter has four arms in a cross pattern. A hexacopter has six. A drone with a forward-facing camera gimbal has a clear front-back asymmetry. A drone with side-mounted antennas has a different azimuthal signature than one without. These features persist whether the drone is hovering, moving, or even when the rotors are stopped.

An OAM-resolved measurement is a Fourier decomposition in the azimuthal direction. A target with a strong four-fold symmetry will deposit power preferentially into ell-equals-zero, plus-or-minus four, plus-or-minus eight, and so on. A target with three-fold symmetry will look different. A target with a forward-back asymmetry but no rotational symmetry will look different again. The power spectrum across OAM modes is, in effect, a fingerprint of the target's azimuthal shape.
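The four-fold claim is easy to check on a toy profile. The sketch below assumes a deliberately crude model: a one-dimensional reflectivity sampled uniformly in azimuth, equal to 1 on four arms and 0 elsewhere, with no radial structure. The function names and the arm width are hypothetical choices for illustration.

```python
import cmath
import math


def azimuthal_spectrum(profile, max_order):
    """Power in each azimuthal harmonic ell of a profile sampled uniformly in angle."""
    n = len(profile)
    spectrum = {}
    for ell in range(-max_order, max_order + 1):
        coeff = sum(
            value * cmath.exp(-1j * ell * 2.0 * math.pi * k / n)
            for k, value in enumerate(profile)
        ) / n
        spectrum[ell] = abs(coeff) ** 2
    return spectrum


def four_arm_profile(n_samples=360, arm_half_width_deg=10.0):
    """Toy reflectivity versus azimuth: 1 on arms at 0/90/180/270 degrees, else 0."""
    profile = []
    for k in range(n_samples):
        angle = 360.0 * k / n_samples
        # Angular distance to the nearest arm centre.
        offset = min(angle % 90.0, 90.0 - angle % 90.0)
        profile.append(1.0 if offset <= arm_half_width_deg else 0.0)
    return profile


spectrum = azimuthal_spectrum(four_arm_profile(), max_order=8)
for ell in range(0, 9):
    print(ell, round(spectrum[ell], 4))
```

In this toy case only orders 0, 4, and 8 carry power, and everything in between is zero to floating-point precision. A real drone breaks the symmetry with its gimbal and antennas, which is what would populate the other orders.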

This is conceptually different from the rotational Doppler case. Rotational Doppler reads dynamics; static azimuthal classification reads geometry. The two are complementary. A practical sensor could use one or both depending on whether the drone is hovering or maneuvering, and depending on the rotor speed regime.

The implementation is also less demanding than rotational Doppler in one important way: it doesn't require coherent reflection from the rotor surface. Static azimuthal shape sensing can work on the integrated speckle pattern, because what you're measuring is the azimuthal distribution of return power, not the phase coherence of any particular surface element. This is a more forgiving regime experimentally.

The constraint here is angular resolution. The OAM beam needs to spatially resolve the drone's azimuthal features, which means the beam waist at the target needs to be comparable to the drone size. This pushes toward shorter range or larger optics. For a 350 millimeter quadcopter at 100 meters, you need beam optics that hold the beam waist on target to roughly the drone's own span, well under a meter, which is achievable but constrains the system design.

Clutter rejection through mode filtering

Drone detection in operational settings rarely happens against a clean background. The drone might be flying in front of trees, against an urban skyline, near a sun glint off a window, or against a fast-moving cloud edge. The detection problem is often signal-to-clutter limited rather than signal-to-noise limited, and the question becomes whether you can distinguish a drone return from the background clutter that's also showing up in your sensor.

OAM-resolved reception offers a potentially useful angle on this. Most natural backgrounds (vegetation, terrain, atmospheric scattering) tend to deposit their power preferentially into low-order OAM modes. Diffuse reflection from a rough natural surface tends to look approximately like a Gaussian return, which is mostly ell-equals-zero with some spread into low orders. A coherent return from a structured target like a drone, especially one with rotor modulation, can have a more distinctive distribution across higher-order modes.

This means a sensor that integrates only the higher-order mode content might see a much improved signal-to-clutter ratio for drone returns relative to background. The drone return retains its power across the high-order modes; the clutter mostly doesn't show up there. This is a different kind of advantage from the previous two: it doesn't require extracting more information from the drone, it requires rejecting more of the clutter.
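The bookkeeping for that comparison can be sketched in a few lines. Everything numeric below is a made-up placeholder, not measured or simulated data: the clutter spectrum is assumed to fall off geometrically with mode order, and the target spectrum is assumed flat. The only point is to show how a mode cutoff changes the ratio if those assumptions hold.

```python
def band_power(spectrum, min_abs_order):
    """Total power in modes whose |ell| is at least min_abs_order."""
    return sum(power for ell, power in spectrum.items() if abs(ell) >= min_abs_order)


def signal_to_clutter(target, clutter, min_abs_order=0):
    """Ratio of target power to clutter power after discarding low-order modes."""
    return band_power(target, min_abs_order) / band_power(clutter, min_abs_order)


# Placeholder spectra (illustrative only): strong clutter concentrated at low
# orders, weak target spread evenly across orders -8..8.
clutter = {ell: 100.0 * 0.2 ** abs(ell) for ell in range(-8, 9)}
target = {ell: 1.0 for ell in range(-8, 9)}

scr_all = signal_to_clutter(target, clutter)
scr_high = signal_to_clutter(target, clutter, min_abs_order=3)
print(f"all modes: {scr_all:.3f}, |ell| >= 3 only: {scr_high:.3f}")
```

The cutoff discards part of the target power too, so the filter only pays off when clutter falls off with mode order faster than the target does. Whether real backgrounds behave that way is the open empirical question.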

The published literature on OAM-LIDAR contains relatively little work on realistic clutter scenarios. Most simulations are against empty-sky backgrounds. Most lab demonstrations use cooperative targets in controlled rooms. A focused study of OAM-resolved drone detection against realistic outdoor clutter, with vegetation and urban backgrounds, would tell us whether the clutter-rejection mechanism works in practice. The physics suggests it should help; whether the magnitude of the help is operationally significant is an empirical question.

Combined OAM and polarization

Rotor blades have characteristic polarization signatures that depend on their material. Carbon fiber blades produce a different polarized return from aluminum or composite blades. Different drone manufacturers use different blade materials, and even within a manufacturer's product line, different drone classes use different blade compositions.

A sensor that resolves both OAM mode and polarization state has access to a much richer feature space than either alone. The OAM modes capture azimuthal structure; the polarization states capture material composition. Different drone classes can be expected to occupy different regions of this combined space, in ways that might separate them more cleanly than either modality on its own.
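To make "combined feature space" concrete, here is a minimal sketch of one way a joint feature vector could be assembled. It assumes the receiver provides per-mode OAM powers plus four linear-polarizer intensities at 0, 45, 90, and 135 degrees, from which the linear Stokes parameters follow in the usual way. The function names and the layout of the vector are hypothetical choices, not a description of any existing sensor.

```python
import math


def linear_stokes(i0, i45, i90, i135):
    """Linear Stokes parameters (S0, S1, S2) from four polarizer-angle intensities."""
    return i0 + i90, i0 - i90, i45 - i135


def dolp(i0, i45, i90, i135):
    """Degree of linear polarization, sqrt(S1^2 + S2^2) / S0."""
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    return math.hypot(s1, s2) / s0


def feature_vector(oam_powers, i0, i45, i90, i135):
    """Normalized OAM mode powers (sorted by order) followed by S1/S0 and S2/S0."""
    total = sum(oam_powers.values())
    oam_part = [oam_powers[ell] / total for ell in sorted(oam_powers)]
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    return oam_part + [s1 / s0, s2 / s0]


# A fully horizontally polarized return with a toy three-mode OAM spectrum.
print(dolp(1.0, 0.5, 0.0, 0.5))
print(feature_vector({-1: 1.0, 0: 2.0, 1: 1.0}, 1.0, 0.5, 0.0, 0.5))
```

Normalizing both halves by total power keeps the vector independent of overall return strength, so range and target reflectivity do not dominate whatever classifier sits downstream.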

The underlying physics is sound, and the engineering is reasonable. Polarimetric sensing is well understood, OAM-resolved sensing is well understood, and combining them in a single receiver doesn't require fundamentally new technology.

What would make these work

The thread that runs through all four scenarios is that OAM helps when the underlying physics couples to angular momentum, and when the system is designed so that the angular-momentum coupling survives the round trip from transmitter to target to receiver. The cases above all have one or both of those properties. Coherent rotational Doppler couples directly to angular momentum and works when coherent reflection is preserved. Static azimuthal classification couples to angular structure of the target and works when the beam resolves the target. Clutter rejection works because OAM modes naturally separate structured from unstructured returns. Combined OAM-polarization works because OAM contributes one dimension of structure that polarization cannot.

In each case, the engineering questions are concrete: what wavelength, what range, what beam waist, what integration time, what mode order. These are the kinds of questions that benefit from focused simulation studies and small-scale experimental demonstrations rather than from broad-stroke proposals. The cases where OAM should help with drone detection are not unlimited, but they're also not empty.
