Backpropagation in the Brain Does Not Work Like AI Architectures

- Trevor Alexander Nestor

The data center arms race is a confidence trick that is doomed to fail because of the underlying physics, which makes our brains work differently from, and orders of magnitude more efficiently than, the machines being built.

What makes the human brain different from transformer-based neural network architectures? Or, put another way, what gives us consciousness, and therefore the moral standing that flows from it, and can we ever approach this empirically rather than as a matter of faith or pure philosophical speculation?

This is a topic I've discussed with some of the top researchers in this area (Dr. Tuszynski, Dr. Murugan, Dr. Anirban, Dr. Craddock, James Tagg, Dr. Tamburini, Dr. Hameroff, etc.). I was invited to present on this topic directly by Dr. Stuart Hameroff at The Science of Consciousness (TSC) conference this year (though the TSC conference was allegedly shut down due to organizer affiliations with Jeffrey Epstein) and was also accepted to present at the APS/IPI conferences.

The singularity narrative is upside down

Large technology companies would like you to buy, on faith, the idea that we are approaching a so-called technological singularity at which machines will outwit the masses, after which we will, in some vague way, be enslaved by them. The narrative is then used to rationalize giving these systems agency, rights, and an ever-larger share of the world's energy and capital.

I want to make the opposite argument. The real singularity, if you want to use that word, is the point at which our collective intelligence (CI in the academic literature) catches up to and then surpasses the games being played on us by the people pushing this story. It is the moment we discover we have been outwitted the entire time, and not by the machines.

Information throughput in groups scales faster than per-unit-of-energy performance scales in transformer-style surveillance architectures. The latter is derivative and runs into asymptotic limits, a fact that Joseph Tainter described in The Collapse of Complex Societies without ever needing to mention GPUs. The former is what researchers studying interbrain synchrony are quietly documenting in lab after lab. Group cognition is not a metaphor. It is measurable, and it grows in a way that transformer architectures do not.

So why are we propping up the entire economy on the opposite assumption? Why are we taking seriously Mark Zuckerberg's stated ambition for data centers the size of Manhattan, or Google's reported plans for orbital data centers with their own dedicated power plants, when by many credible estimates the human brain is hundreds of thousands of times more efficient at compute than anything we are currently building, and when we cannot even reliably house, feed, or educate our own people?

Yann LeCun and others have proposed so-called world models, in which AI-endowed robotic agents are placed into society and taught to take human jobs. Years and oceans of training data later, we still do not have reliable self-driving cars, and we do not have the patience or resources to raise our own children. There is a tell in there, somewhere, if you are willing to look at it.

The 2024 Nobel Prize in Physics, awarded to John Hopfield and Geoffrey Hinton for work on machine learning, has come in for serious criticism along these lines. The complaint is not that the work is uninteresting. The complaint is that it does not teach us about the laws of nature in the way a physics prize is supposed to. It is computer science with a heuristic architecture imposed on it, evaluated by humans with attention in the loop, who are also the ones who decide what the simulation means. Nature has not been falsified. Nature has been bypassed. Some have gone further and argued that the prize is being used to rationalize the AI surveillance bubble that has been under construction for at least as long as I have been thinking about consciousness.

How I got here

In 2010, against the views of Stephen Hawking at the time, I had a debate with one of my colleagues at UC Berkeley in which I used Gödel's incompleteness theorem to argue, skeptically, that whatever the brain is doing to generate consciousness probably implicates new physics, or is at least non-algorithmic, and therefore cannot be simulated by any computer. Or, more carefully, any simulation would be too lossy a compression to be useful.

Shortly after, I encountered the work of Roger Penrose, who had reached essentially the same conclusion from Gödel and gone further, implicating quantum gravity and macroscopic quantum-like effects in brain tissue. The standard objection is obvious. Wet, warm, noisy environments should decohere any such effect long before it could matter. I considered that a serious problem for the original formulation of the theory, but I was intrigued enough to keep going.

The next year I started studying quantum gravity and lattice mathematics under Richard Borcherds, the Fields medalist who proved the monstrous moonshine conjecture. In 2018, when I was in Boulder, the National Institute of Standards and Technology was working to standardize post-quantum cryptography on the basis that certain lattice problems, the shortest vector problem in particular, are NP-hard under randomized reductions. No known classical or quantum algorithm solves them efficiently, so they are believed to be intractable. That mattered to me for an old reason. It has always struck me as too hubristic a request of the universe that any class of unbreakable encryption should exist above scrutiny, available to a privileged group of elites for the hoarding of secrets.

Here is where it gets interesting.

Four problems, one shape

If you read the literature carefully, you find that four apparently distinct problems have all been framed as related, and in some cases as equivalent.

The black hole information paradox.

Post-quantum cryptography.

The shortest vector problem on a high-dimensional lattice.

The hard problem of consciousness, and the related binding problem.

This is not a fringe claim. It is in the literature. And it has practical consequences. The research of a physicist working on the black hole information paradox can, at least in principle, be quietly redirected toward developing cryptography. The work of a mathematician on string theory or lattice mathematics can be quietly redirected toward AI surveillance systems. The researchers and the public need not be told.

In each case, the physics needed for a resolution is not well established or widely known. In the black hole case, information falls in, but quantum mechanics insists that unitarity is preserved. The hard problem of consciousness has been mapped, by researchers like Susskind and others, onto the shortest vector problem over a high-dimensional lattice or its geometric equivalent, a non-commutative torus. Both of those structures appear empirically in the brain. The Blue Brain Project found them. So did Edvard and May-Britt Moser, along with John O'Keefe, in their work on grid cells and place cells, for which they shared the 2014 Nobel Prize in Physiology or Medicine.

When researchers actually went after the black hole information paradox, monstrous moonshine and macroscopic black hole entropy turned out to be linked through holographic descriptions of black hole microstates. Several modern theories of quantum gravity now treat gravity itself as an entropic or thermodynamic force. The Cardy formula gives the asymptotic density of states in a 2D conformal field theory, providing a microscopic derivation of the Bekenstein-Hawking entropy. To resolve the paradox of information that must be both publicly hidden and uniquely accessible, the theory of secret black hole information islands was introduced. These are regions inside the event horizon that, on recent quantum gravity accounts, are holographically encoded in, and possibly entangled with, the leaking Hawking radiation. They follow the unitarity-preserving Page curve.
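For readers who want the two entropy formulas named above written out, here they are in their standard textbook form. The symbols are the usual conventions and do not come from this article: c and c-bar are the central charges of the 2D conformal field theory, L_0 and L-bar_0 are the left- and right-moving conformal weights (taken large), A is the horizon size, and G is Newton's constant.

```latex
% Cardy formula: asymptotic entropy of a 2D CFT at large conformal weights
S_{\text{Cardy}} = 2\pi\sqrt{\frac{c\,L_0}{6}} + 2\pi\sqrt{\frac{\bar{c}\,\bar{L}_0}{6}}

% Bekenstein-Hawking entropy (units with \hbar = k_B = 1 and the speed of light set to 1)
S_{\text{BH}} = \frac{A}{4G}
```

The best-known case where the two agree is the three-dimensional BTZ black hole, where the Brown-Henneaux central charge is c = 3ℓ/2G (ℓ being the anti-de Sitter radius) and the Cardy count reproduces A/4G, with A the horizon circumference.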

Notice the shape of this. Information is trapped, and yet it escapes, encoded, in something that radiates out. That is a Cartesian duality if you want to read it that way. The hidden islands of entanglement entropy are where the mind is stored, apart from the body of the black hole. That same shape may be exactly what we need to understand the brain.

What we know about the brain that does not fit the transformer story

Let me list the things we know empirically that the dominant AI story does not account for.

Information and memory in the brain are processed non-locally, distributed across tissues, and not stored in localized binary logic gates the way a von Neumann architecture stores them. The speed of behavior and information retrieval seems to outrun what standard electrochemical signaling across dendritic membranes can permit on its own.

Backpropagation in the brain faces what is known as the weight transport problem, one part of the broader credit assignment problem, and has no widely accepted, biologically plausible mechanism. In artificial networks we cheat: the backward pass reuses the exact forward weights to route error signals back through the network. In biological tissue, no one has produced an obviously correct account of how feedforward and feedback signaling could be coordinated in this way.
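To make the weight transport issue concrete, here is a minimal sketch of ordinary backpropagation in a tiny two-layer network, written in plain Python. Everything in it (the XOR task, the layer sizes, the learning rate, the function name `train`) is an illustrative assumption, not something taken from any particular model. The line to watch is the one computing `delta_h`: the error sent back to each hidden unit is multiplied by `W2[j]`, the very same weight used on the way forward. A biological synapse has no known way to hand its weight to a separate feedback pathway.

```python
import math
import random

def train(steps=500, lr=0.1, seed=0):
    """Full-batch backprop on XOR with a 2-3-1 network (tanh hidden, linear output)."""
    rng = random.Random(seed)
    data = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]
    n_in, n_hid = 2, 3
    # Forward weights: W1 maps input -> hidden, W2 maps hidden -> output.
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hid)]
    b1 = [0.0] * n_hid
    W2 = [rng.uniform(-1, 1) for _ in range(n_hid)]
    b2 = 0.0
    losses = []
    for _ in range(steps):
        gW1 = [[0.0] * n_in for _ in range(n_hid)]
        gb1 = [0.0] * n_hid
        gW2 = [0.0] * n_hid
        gb2 = 0.0
        loss = 0.0
        for x, y in data:
            # Forward pass.
            h = [math.tanh(sum(W1[j][i] * x[i] for i in range(n_in)) + b1[j])
                 for j in range(n_hid)]
            out = sum(W2[j] * h[j] for j in range(n_hid)) + b2
            err = out - y
            loss += 0.5 * err * err
            # Backward pass.
            gb2 += err
            for j in range(n_hid):
                gW2[j] += err * h[j]
                # Weight transport: the feedback signal reuses the forward weight W2[j].
                delta_h = err * W2[j] * (1.0 - h[j] ** 2)
                gb1[j] += delta_h
                for i in range(n_in):
                    gW1[j][i] += delta_h * x[i]
        # Gradient descent step on the mean gradient.
        n = len(data)
        for j in range(n_hid):
            W2[j] -= lr * gW2[j] / n
            b1[j] -= lr * gb1[j] / n
            for i in range(n_in):
                W1[j][i] -= lr * gW1[j][i] / n
        b2 -= lr * gb2 / n
        losses.append(loss / n)  # loss measured before this step's update
    return losses

losses = train()
print(f"mean loss: first step {losses[0]:.4f}, last step {losses[-1]:.4f}")
```

The network learns only because the backward pass has perfect knowledge of `W2`. That requirement, trivial in software, is exactly what has no obvious counterpart in tissue.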

Hyperscanning studies show that brain activity synchronizes across people sharing a social environment, and that this synchronization correlates with shared understanding and empathy. Group performance, in many tasks, scales faster than the sum of individual performances. Transformer architectures do not have this feature. They are not getting it.

Empirical studies of human decision-making show interference patterns that look more like the mathematics of non-classical physics than like classical probability. Psychedelics produce conformal fractal patterns across scales in the visual field, again more consistent with non-classical geometry than with classical signal processing. Single-celled organisms display behaviors complex enough that you would expect them to require a brain. The energy efficiency of the brain alone tells you that purely electrochemical signaling cannot account for perceptual binding.

Inside the cytoskeleton of cells are long cylindrical proteins called microtubules, which anesthetics selectively block. Xenon anesthetics have been tested with different xenon isotopes, and anesthetic potency varies with the isotope. That should stop you in your tracks. Different isotopes of the same element, with the same chemistry, produce different effects on consciousness. The natural reading is that consciousness is partly generated by non-classical means involving spin dynamics, consistent with the radical pair mechanism known from quantum biology.

Ultraviolet super-radiance has been measured in brain tissue in some newer (and admittedly contested) studies. Researchers, including some I have spoken with at length, suggest that microtubules act as time-crystalline optical waveguides. Light has been shown to modulate long-term potentiation and long-term depression. Ultra-weak photon emission from isolated neurons correlates with action potential firing. There are even more fringe studies suggesting superconducting or near-superconducting effects in microtubules. Those last claims need much more experimental work, and I will not defend them past saying they should not be dismissed without that work.

The model

Penrose's original orchestrated objective reduction theory has had problems with experiment. But recent work in quantum biology suggests that macroscopic quantum effects in the warm, wet, noisy brain might be possible after all, through periodic driving into Fröhlich condensates, or through topological protection, both of which are under active investigation at the major tech companies. (Microsoft, where I once worked, has invested heavily in Majorana physics for exactly these reasons. The work of James Tagg and Dr. Kerskens ties this directly into the model I am about to sketch.)

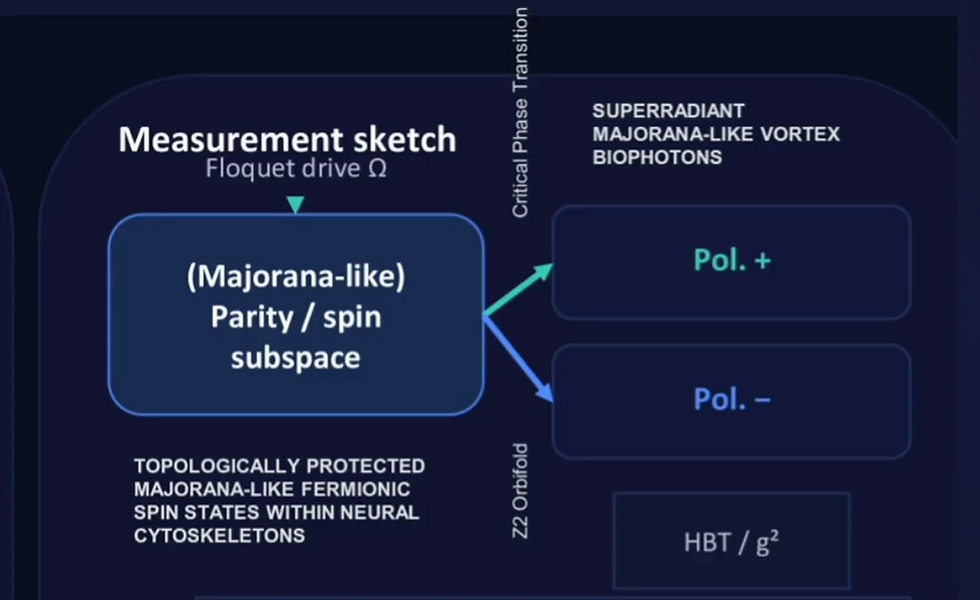

In our model, information stored in Majorana-like fermionic spin states hosted within microtubules is orchestrated to saturation. At a critical fixed point, a tipping point, the information bosonizes into light-like modes, manifesting as cascades of super-radiant ultra-weak Majorana-like vortex biophotons. These collapse the evolving superposition. The collapse is triggered gravitationally. The phase transition itself is captured mathematically by Z2 orbifolds.

I once discussed with Edward Witten his proposal that pure gravity in anti-de Sitter space (a spacetime of negative curvature) might be described by the monster conformal field theory, which describes massless bosons. Z2 orbifolds are how you transition from such a CFT to a fermionic spin system in de Sitter space (positive curvature), like the so-called baby monster CFT. These are real mathematical objects. In quantum gravity models, Z2 orbifolds are fundamental in constructing Israel junction conditions, the rules for gluing two spacetime geometries together.

The Riemann zeta function and its generalizations, like the Epstein zeta function for high-dimensional lattices, are used in this setting to regularize divergent vacuum energy and define partition functions. In experiments, the zeros of the Riemann zeta function can be reproduced by periodically driving qubits. Mathematical physicists like Dr. Tamburini, with whom I have had many long conversations, have shown you can describe the behavior of particles that are their own antiparticles (Majorana fermions) on curved spacetime using the zeta function. The critical line marks the saturation point in the statistics of these systems. It also turns up in tipping points for macroscopic quantum-like behavior, including quantum chaos and fluid turbulence. This is more or less exactly what the Hilbert-Polya conjecture proposes, that the zeros of zeta could be the energy levels of some unknown quantum system. We may be looking at one.

In loop quantum gravity, the spacetime substrate is a spin foam network. Causal fermion systems theory uses similar graph structures to quantize spacetime. These look extraordinarily like the spin-state networks in the brain. Scott Aaronson once proposed to me that gravity and spin foams might support a kind of non-computable calculation, which is essentially Penrose's proposal again. Penrose has further suggested that a complete theory of quantum gravity will be written in the mathematics of null light geodesics, sometimes called soft hair in twistor theory. The monster vertex operator algebra, corresponding to the monster CFT, maps cleanly to twistors. Those light-like modes are analogous to the hidden islands of entanglement entropy I mentioned earlier in the black hole context. They are how the mind, in this picture, attaches to the body of the neural network.

If you have been keeping score, that is a single picture in which the black hole information paradox, the shortest vector problem, post-quantum cryptography, and the hard problem of consciousness are all aspects of the same physics.

A falsifiable prediction

I do not currently have funding for this work, but I am in active discussions with research groups interested in collaborating. It seems the available funding is mostly directed toward perpetuating the status quo. So what I can offer at the moment, in lieu of an experiment, is a numerical simulation and a falsifiable prediction.

If the model is correct, information stored in Majorana-like spin states within microtubules should imprint onto super-radiant ultra-weak biophotons. Spectral analysis of those photon signatures could provide a method of post-quantum cryptanalysis. Specifically, the smallest eigenvalue of the Dirac-like operator spectrum over the relevant space corresponds to the shortest vector of the high-dimensional lattice, or the non-commutative torus, that any given neural network represents. There is already related work attacking the shortest vector problem with spin-glass and folded spectrum methods. The proposal here is to drive a neural network representing such a lattice problem to gravitational collapse and read out the geometry from the ultra-weak photon spectra at the phase transition.
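For readers unfamiliar with the shortest vector problem referred to above, the following toy sketch shows what the problem asks. It is a brute-force search over a small two-dimensional lattice, not the spectral method proposed here and not any published attack. The function name `shortest_vector`, the example basis, and the coefficient bound are all illustrative assumptions.

```python
from itertools import product

def shortest_vector(basis, bound):
    """Brute-force the shortest nonzero lattice vector.

    Tries every integer combination of the basis vectors with
    coefficients in [-bound, bound] and keeps the shortest result.
    """
    dim = len(basis[0])
    best_vec, best_norm2 = None, None
    for coeffs in product(range(-bound, bound + 1), repeat=len(basis)):
        if not any(coeffs):
            continue  # skip the zero vector
        vec = tuple(sum(c * b[k] for c, b in zip(coeffs, basis))
                    for k in range(dim))
        norm2 = sum(v * v for v in vec)
        if best_norm2 is None or norm2 < best_norm2:
            best_vec, best_norm2 = vec, norm2
    return best_vec, best_norm2

# A deliberately "bad" basis: long, nearly parallel vectors that
# nonetheless generate the plain integer grid (determinant 1).
basis = [(5, 8), (8, 13)]
vec, norm2 = shortest_vector(basis, bound=15)
print(vec, norm2)  # squared length is 1
```

The search visits (2 × bound + 1)^n coefficient combinations for an n-dimensional lattice, so this naive approach becomes hopeless almost immediately as the dimension grows. That blow-up, together with the absence of any known efficient alternative, is what lattice-based cryptography relies on.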

If the photons originate from exotic Majorana-like states in the cell, they should carry a quantum fingerprint. A system with conserved parity is linked to the polarization of the photons it emits. From numerical simulations I predict three measurable signatures.

First, Floquet sidebands. Extra spectral lines from periodic driving.

Second, a magnetic field-dependent polarization bias.

Third, strong cross-correlations showing photons alternate polarization in sequence.
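As a rough illustration of what the first signature looks like in a spectrum, the sketch below uses a purely classical analogy: a single tone whose phase is periodically modulated. This is not the numerical simulation behind the predictions above, and the names and parameters (`sideband_spectrum`, `carrier_bin`, `drive_bin`, `beta`) are hypothetical. It shows only the generic fact that periodic driving adds spectral lines spaced by the drive frequency on either side of the original line.

```python
import cmath
import math

def sideband_spectrum(n_samples=256, carrier_bin=64, drive_bin=8, beta=1.0):
    """Normalized DFT magnitudes of a periodically phase-modulated tone.

    carrier_bin and drive_bin are frequencies in DFT-bin units, so the
    signal is exactly periodic over the sampling window.
    """
    signal = [
        math.cos(2 * math.pi * carrier_bin * n / n_samples
                 + beta * math.sin(2 * math.pi * drive_bin * n / n_samples))
        for n in range(n_samples)
    ]
    mags = []
    for k in range(n_samples // 2):
        acc = sum(s * cmath.exp(-2j * math.pi * k * n / n_samples)
                  for n, s in enumerate(signal))
        mags.append(abs(acc) / (n_samples / 2))
    return mags

mags = sideband_spectrum()
# Bins holding a clearly visible spectral line.
lines = [k for k, m in enumerate(mags) if m > 0.05]
print(lines)  # carrier at 64, sidebands at 64 ± 8 and 64 ± 16
```

Without the drive (`beta=0.0`) only the line at bin 64 survives. With it, lines appear at multiples of the drive frequency away from the carrier, with strengths that fall off with distance. In an experiment, the analogous test would be whether any extra lines track the driving frequency when it is changed.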

Detecting any of these would be strong evidence that biophotons are not metabolic noise but are carrying quantum information from deep inside the cell, and that this is the substrate through which the brain achieves the equivalent of backpropagation. It would also be evidence for what actually distinguishes mind from machine.

Why this matters now

The argument I am making is not anti-technology. It is anti-confidence-trick. We are being asked to spend astonishing amounts of money and energy on an architecture whose advocates cannot tell you, in physical terms, why it should approach what a three-pound piece of biological tissue does for twenty watts. We are being asked to grant moral standing to systems whose advocates have not solved, and in many cases have not seriously engaged with, the physics that would make such standing meaningful.

The serious answer to the consciousness question may not require Manhattan-sized data centers or orbital power plants. It may require something much harder for the present economic order to monetize, namely, a deep and patient interest in what makes us human, and in what allows one mind to connect with another.

Trevor Nestor trevor.nestor at berkeley dot edu
